Historian Architectural Planning

Overview

Corporate and Plant Network and System Architectural design is a large and complex undertaking.

In this document, we slice one piece of the architectural pie out of the context of the whole and discuss the individual elements associated with Historians. This is not the normal approach, because the design of the whole system will likely affect the design of the individual systems and how they are deployed. However, this discussion provides insight into the product (in this case the Historian) in a unique and valuable way.

Architectural Challenges

From a technical perspective, as with most Windows software, all the functionality that a Historian needs can be installed on one OS.

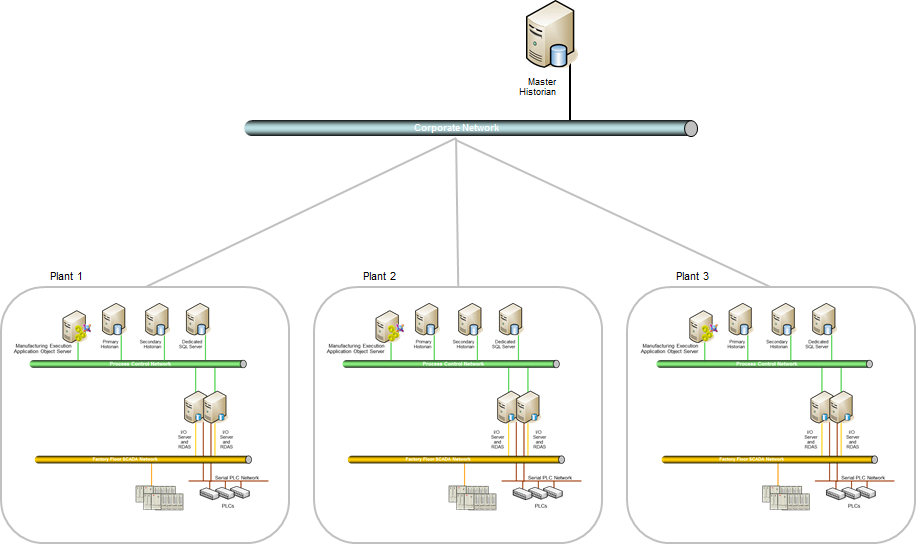

From an architectural perspective, the Historian can be deployed fully or partially on multiple systems.

The reasons to spread functionality are typically related to security, ease of maintenance and upgrades, performance, and failover.

These concepts are more easily explained if we follow a typical growth path starting with one Historian in one plant and growing to a multi-plant distributed network with Master, Primary, and Secondary Historians.

Many companies start with one Historian. It is also common to install the relevant IO server(s) (OPC, DDE, SuiteLink, DA, etc.) on the same box. This works well at first, but as usage of and reliance on the data increase over time, certain architectural flaws become evident.

Common Historian Architectural Flaws

- SQL Server Overrun: Data integrity, data loss, or performance issues:

The Historian uses SQL Server as its base component. Companies also commonly use the same SQL Server that the Historian relies on for other transactional systems. Over time, the processing load may increase until both SQL Server and Historian response times become too slow. If the SQL Server transaction load becomes too high, the Historian can start losing data intermittently.

- Maintenance: Loss of data:

Any reboot, lockup, or maintenance on the server will likely result in data loss for the duration of the outage. Effectively, the server and any locally installed IO server(s) cannot be updated or upgraded without loss of data.

- OS or Hardware System Failure: Loss of data:

OS blue screens, hardware failures, full disks, and similar faults all result in loss of data.

- Corporate Standards Issue: Inability to standardize data measurement and nomenclature across plants:

Many companies have one or more historians at each plant. They want to roll up and/or standardize this data for regulatory or internal reporting. Unfortunately, each plant is likely to use different nomenclature and different configurations, and to hold plant-specific data that is not easily filtered out of corporate requests.

Resolution

Disclaimer

It should be noted that this discussion includes network segmentation but does not address the many significant and complex issues (and potential recommendations) associated with cyber security.

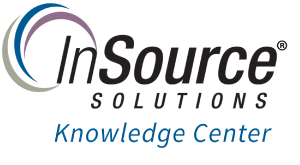

1. IO Server Redundancy and Failover

The first architectural item on the agenda for any plant is to provide IO server failover and redundancy. As part of this step, any IO server installations on the Historian are removed. The Historian is instead configured to use a primary IO server and automatically switches to a secondary IO server if the primary fails.
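The primary/secondary selection described here can be illustrated with a minimal Python sketch. It is not the Historian's actual failover mechanism, which is configured in the product. The `IOServer` class, the `select_io_server` function, the server names, and the health check are all hypothetical and exist only to show the priority-ordered fallback.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class IOServer:
    """Hypothetical description of an IO server endpoint."""
    name: str
    role: str  # "primary" or "secondary"


def select_io_server(
    servers: Sequence[IOServer],
    is_healthy: Callable[[IOServer], bool],
) -> Optional[IOServer]:
    """Return the first healthy server in priority order, or None if all are down."""
    for server in servers:
        if is_healthy(server):
            return server
    return None


# Assumed example: the primary is down, so the secondary is selected.
servers = [IOServer("IOSRV-A", "primary"), IOServer("IOSRV-B", "secondary")]
down = {"IOSRV-A"}
active = select_io_server(servers, lambda s: s.name not in down)
print(active.name if active else "no IO server available")  # prints "IOSRV-B"
```

Listing the servers in priority order keeps the rule simple: the primary is always preferred when it is healthy, and the secondary is used only when the primary fails.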

2. Process and PLC Data Network Segmentation

The second architectural item is to isolate all PLC traffic on its own network, fronted only by one or more IO servers. This improves and helps maintain the data integrity and performance of the network used by the Historian. It also offloads IO network processing from the Historian so that it can focus on its primary data collection tasks. There are many other reasons for this network segmentation, but further discussion is outside the scope of this document.

3. Historian Data Collection Failover

The third architectural item is to provide a method for Historian failover for data collection. The Wonderware data collection engine of the Historian can be installed remotely as a secondary failover point. The remote engine is typically referred to as an RDAS node. A good target for RDAS installation is one of the redundant IO servers.

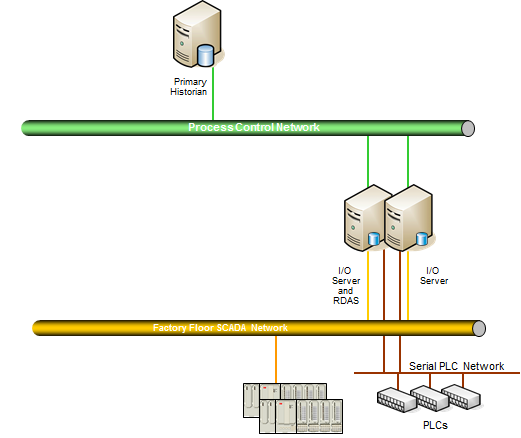

4. Dedicate a SQL Server for non-Historian transactional data

The fourth architectural item is to offload standard SQL processing to a dedicated SQL Server. Otherwise, SQL Server processing can reach peak levels that prevent the Historian from reliably collecting data.

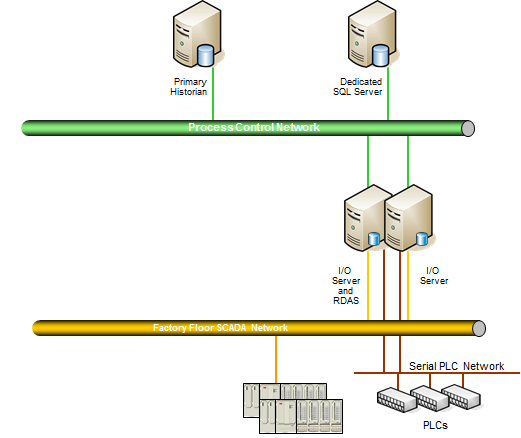

5. Install a Second Historian

The fifth architectural growth pattern is to install a second Historian to enable redundancy and failover in both data capture and data storage. This approach is typically associated with ArchestrA object data historization. It can be combined with RDAS for a third level of redundancy, or it can remove the need for RDAS as a secondary failover point altogether.

6. Install a Master Historian

The sixth architectural item is the installation of a master historian. In a multi-plant environment this provides companies with an automated way to standardize tag nomenclature and aggregate data from multiple disparate historians. The master data storage is typically used as the final master data repository for analysis, regulatory storage, and corporate reporting.
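To make the tag standardization idea concrete, the following minimal Python sketch maps plant-local tag names onto a shared corporate nomenclature and drops plant-unique tags that have no mapping. It is an illustration only, not the product's replication feature. The plant names, tag names, `TAG_MAP`, and both functions are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical mapping from (plant, local tag name) to a corporate standard name.
TAG_MAP: Dict[Tuple[str, str], str] = {
    ("PlantA", "TT101.PV"): "BOILER1.TEMP",
    ("PlantB", "Boiler_1_Temp"): "BOILER1.TEMP",
}


def to_corporate_tag(plant: str, local_tag: str) -> Optional[str]:
    """Return '<plant>.<standard name>', or None for unmapped, plant-unique tags."""
    standard = TAG_MAP.get((plant, local_tag))
    return None if standard is None else f"{plant}.{standard}"


def rollup(samples: List[Tuple[str, str, float]]) -> Dict[str, float]:
    """Keep the latest value per corporate tag, dropping unmapped tags."""
    result: Dict[str, float] = {}
    for plant, local_tag, value in samples:
        tag = to_corporate_tag(plant, local_tag)
        if tag is not None:
            result[tag] = value
    return result


# Assumed sample data: two mapped boiler temperatures and one plant-unique tag.
samples = [
    ("PlantA", "TT101.PV", 181.5),
    ("PlantB", "Boiler_1_Temp", 179.0),
    ("PlantB", "LocalOnly", 1.0),
]
print(rollup(samples))
```

Keeping the mapping in one explicit table means each plant can retain its local names while corporate reports see a single consistent naming scheme.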

7. Virtualize

The seventh architectural item is a recommendation to virtualize the mission-critical servers and workstations in the plant environment. Among a great many benefits (outside the scope of this document), this greatly assists in assuring recovery of key systems. The top priority for virtualization in this discussion would be the IO servers.

This concludes this discussion. It should be noted that these steps imply a sequence but the sequence is merely common, not mandatory.